Codex Data Source (Beta)

⚠️ Codex log support is experimental while the Codex CLI log format continues to evolve.

ccusage can read OpenAI Codex CLI session logs as one of its supported local data sources. Codex uses the same unified and focused report model as Claude Code, OpenCode, Amp, and pi-agent.

Focused Views

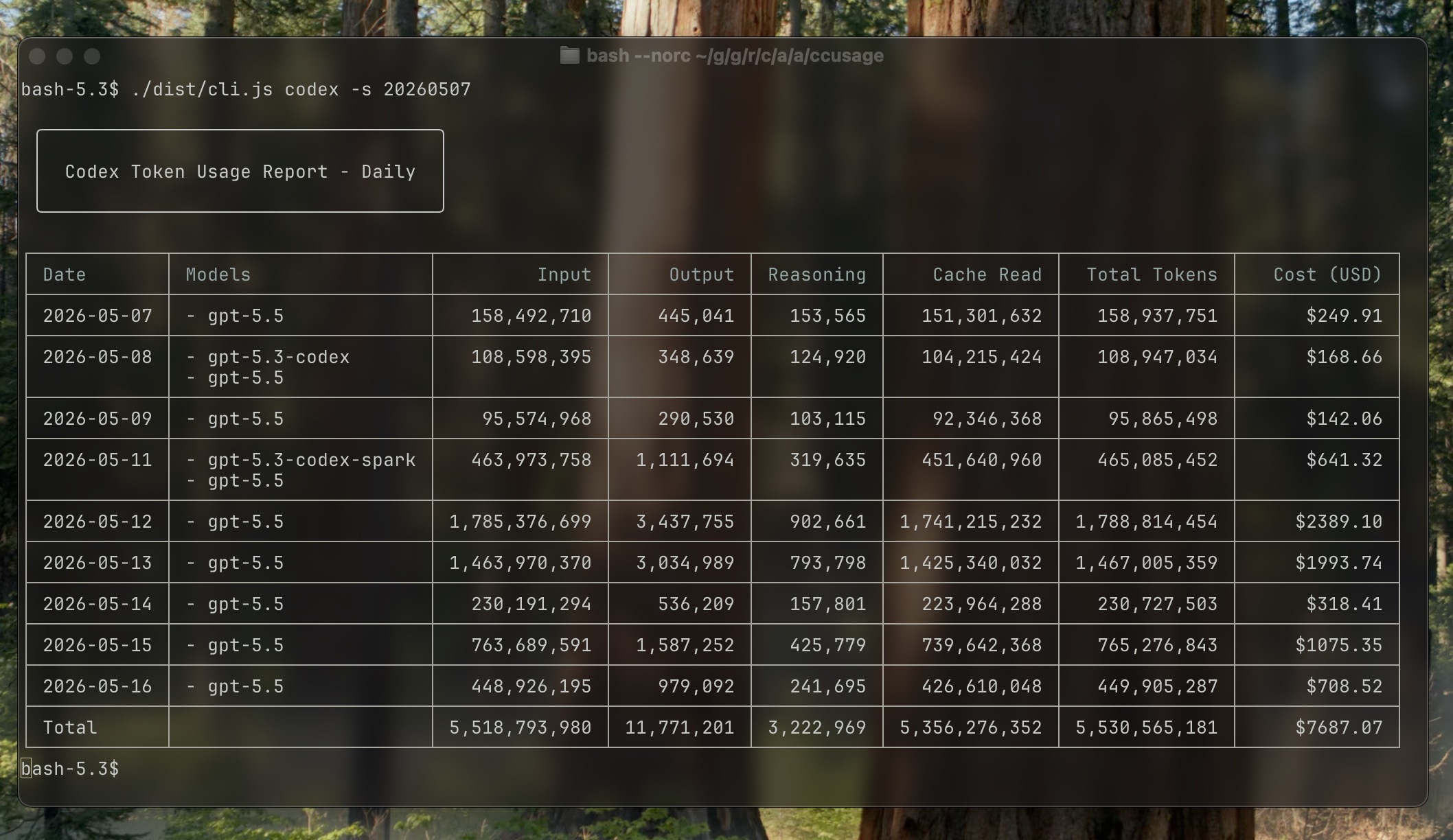

# Daily Codex usage

ccusage codex daily

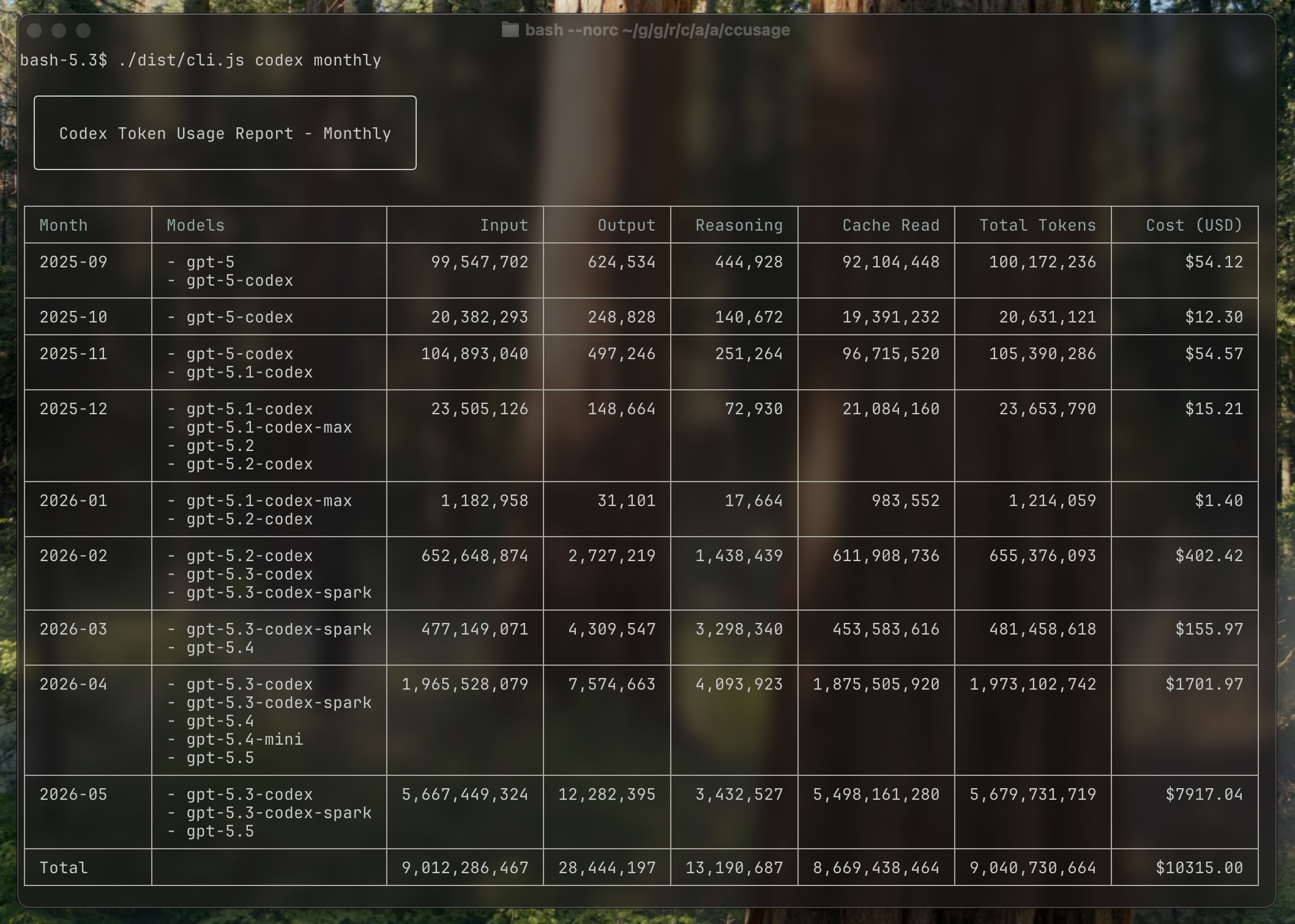

# Monthly Codex usage

ccusage codex monthly

# Codex sessions

ccusage codex sessionMost users can start with unified reports such as ccusage daily. Add the codex namespace only when you want to focus the same report shape on Codex usage or pass Codex-specific options such as --speed.

Data Source

The CLI reads Codex session JSONL files located under CODEX_HOME (defaults to ~/.codex). CODEX_HOME can be one directory or a comma-separated list of directories. Each file represents a single Codex CLI session and contains running token totals that the tool converts into per-day or per-month deltas.

CODEX_HOME="$HOME/.codex,$HOME/.codex-work" ccusage codex dailyReport Views

| Focused view | Description | See also |

|---|---|---|

ccusage codex daily | Aggregate usage by date | Daily Usage |

ccusage codex monthly | Aggregate usage by month | Monthly Usage |

ccusage codex session | Group usage by Codex session | Session Usage |

These views support --json, --compact, --offline, and --speed auto|standard|fast.

Monthly Example

What Gets Calculated

- Token deltas – Each

event_msgwithpayload.type === "token_count"reports cumulative totals. The CLI subtracts the previous totals to recover per-turn token usage (input, cached input, output, reasoning, total). - Per-model grouping – The

turn_contextmetadata specifies the active model. We aggregate tokens per day/month and per model. Sessions lacking model metadata (seen in early September 2025 builds) are skipped. - Pricing – Rates come from LiteLLM's pricing dataset via the shared

LiteLLMPricingFetcher. Model aliases such asgpt-5.5are resolved through the pricing cache when available. - Speed pricing –

--speed autois the default. It readsconfig.tomlfrom eachCODEX_HOMEroot and applies fast pricing when any Codex config hasservice_tier = "priority"or legacyservice_tier = "fast"configured. Fast mode uses the model-specific LiteLLM multiplier when available and otherwise falls back to 2x pricing. Pass--speed fastor--speed standardto override config-based detection. - Legacy fallback – Early September 2025 logs that never recorded

turn_contextmetadata are still included; the CLI assumesgpt-5for pricing so you can review the tokens even though the model tag is missing (the JSON output also marks these rows with"isFallback": true). - Cost formula – Non-cached input uses the standard input price; cached input uses the cache-read price (falling back to the input price when missing); and output tokens are billed at the output price. All prices are per million tokens. Reasoning tokens may be shown for reference, but they are part of the output charge and are not billed separately.

- Totals and reports – Daily, monthly, and session views display per-model breakdowns, overall totals, and optional JSON for automation.

Environment Variables

| Variable | Description |

|---|---|

CODEX_HOME | Override the root directory, or comma-separated root directories, containing Codex session folders |

LOG_LEVEL | Adjust log verbosity (0 silent … 5 trace) |

When Codex emits a model alias (for example gpt-5.5), the CLI automatically resolves it through the LiteLLM pricing data when possible. No manual override is needed.

Speed Pricing

Codex logs usually do not include whether a turn used fast mode. By default, ccusage codex uses --speed auto, reads config.toml from each CODEX_HOME root, and treats service_tier = "priority" or legacy service_tier = "fast" as fast pricing when any configured root opts into it. Fast mode uses the model-specific LiteLLM multiplier when available and otherwise falls back to 2x pricing.

# Default: read Codex config.toml

ccusage codex daily --speed auto

# Force fast pricing

ccusage codex daily --speed fast

# Force standard pricing

ccusage codex daily --speed standardJSON Output

Codex focused views use the same JSON mode as the shared reports:

ccusage codex daily --json

ccusage codex monthly --json

ccusage codex session --jsonSession JSON includes per-model breakdowns, cached token counts, lastActivity, and isFallback flags for any events that required the legacy gpt-5 pricing fallback.

Have feedback or ideas? Open an issue so we can improve Codex support.

Troubleshooting

Why are there no entries before September 2025?

OpenAI's Codex CLI started emitting token_count events in commit 0269096 (2025-09-06). Earlier session logs simply don't contain token usage metrics, so ccusage codex has nothing to aggregate. If you need historic data, rerun those sessions after that Codex update.

What if some September 2025 sessions still get skipped?

During the 2025-09 rollouts a few Codex builds emitted token_count events without the matching turn_context metadata, so the CLI could not determine which model generated the tokens. Those entries are ignored to avoid mispriced reports. If you encounter this, relaunch the Codex CLI to generate fresh logs—the current builds restore the missing metadata.